The soaring scale of NVIDIA GTC alongside AI’s hyperscaling era:

Attendance surged at the Super Bowl of IT this year, reflecting NVIDIA’s expanding market influence and the global pull of American AI infrastructure.

If you want a clear indicator of how central information technology, and AI in particular, has become to the global economy, look no further than NVIDIA GTC. In 2025, roughly 20,000 attendees packed into San Jose’s SAP Center. A year later, the conference drew nearly 35,000 in-person attendees and 187,000 virtual registrations. For Jensen Huang’s keynote, scheduled for 11 a.m. on Monday, March 16, attendees began lining up outside as early as 6:30 a.m.

Once a gathering for developers and even hobbyist gamers, NVIDIA GTC has evolved to draw a who’s who of modern computing. This year, NVIDIA CEO Jensen Huang was joined by industry leaders like Michael Dell, HPE President and CEO Antonio Neri, and Perplexity CEO Aravind Srinivas. U.S. Secretary of Commerce Howard Lutnick’s attendance reinforced the increasingly close link between American AI and national economic and industrial strategy.

Here are our top three takeaways from Huang’s keynote, along with what IT leaders should keep in mind as they make infrastructure investment decisions in the year ahead.

3 takeaways from the NVIDIA GTC keynote address

Together, these three insights capture the direction of travel for infrastructure investment.

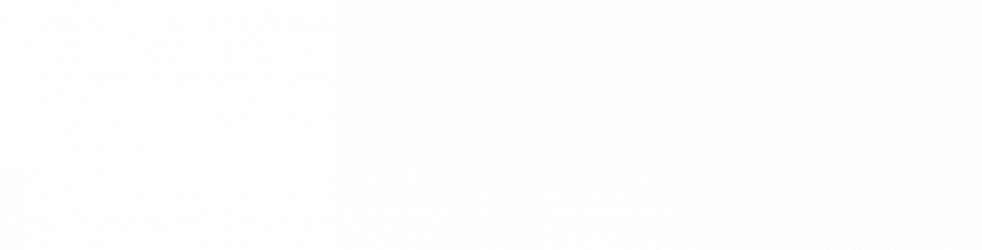

1. Tokens and inference are redefining the scale of AI workloads

Huang highlighted the industry’s shift toward agentic AI systems — machines that don’t just respond to prompts but generate code, create their own interactivity, and solve problems with increasing autonomy. Those capabilities dramatically expand the volume of inference required to support deployed AI systems, pushing inference workloads nearly 100,000 times higher than they were just a few years ago. In turn, that surge is placing significant strain on high-bandwidth memory at the upper end of the stack, creating downstream risk for the memory supply chain.

What this means for infrastructure investment

Infrastructure planning can no longer treat inference as a secondary workload. Memory architecture, bandwidth, and supply resilience now sit at the center of AI infrastructure strategy. In practice, the organizations pulling ahead are the ones optimizing for token throughput and efficiency, not just raw compute.
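One way to make "token throughput and efficiency" concrete is to compare candidate deployments on tokens per kilowatt-hour and cost per million tokens rather than on peak compute alone. The sketch below is only an illustration: the `Deployment` class, the configuration names, and every number in it are hypothetical placeholders, not figures from the keynote or from any vendor.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """Hypothetical inference deployment; all fields are illustrative inputs."""
    name: str
    tokens_per_second: float  # sustained inference throughput
    power_kw: float           # average power draw in kilowatts
    cost_per_hour: float      # fully loaded hourly cost in USD

    def tokens_per_hour(self) -> float:
        return self.tokens_per_second * 3600

    def tokens_per_kwh(self) -> float:
        # Running at power_kw for one hour consumes power_kw kWh.
        return self.tokens_per_hour() / self.power_kw

    def cost_per_million_tokens(self) -> float:
        return self.cost_per_hour / self.tokens_per_hour() * 1_000_000


# Placeholder numbers chosen only to show the comparison, not real benchmarks.
config_a = Deployment("config_a", tokens_per_second=50_000, power_kw=100.0, cost_per_hour=300.0)
config_b = Deployment("config_b", tokens_per_second=40_000, power_kw=60.0, cost_per_hour=200.0)

for d in (config_a, config_b):
    print(f"{d.name}: {d.tokens_per_kwh():,.0f} tokens/kWh, "
          f"${d.cost_per_million_tokens():.2f} per million tokens")
```

With these made-up inputs, the configuration with lower raw throughput comes out ahead on both efficiency metrics, which is the kind of trade-off a throughput-first evaluation is meant to surface.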

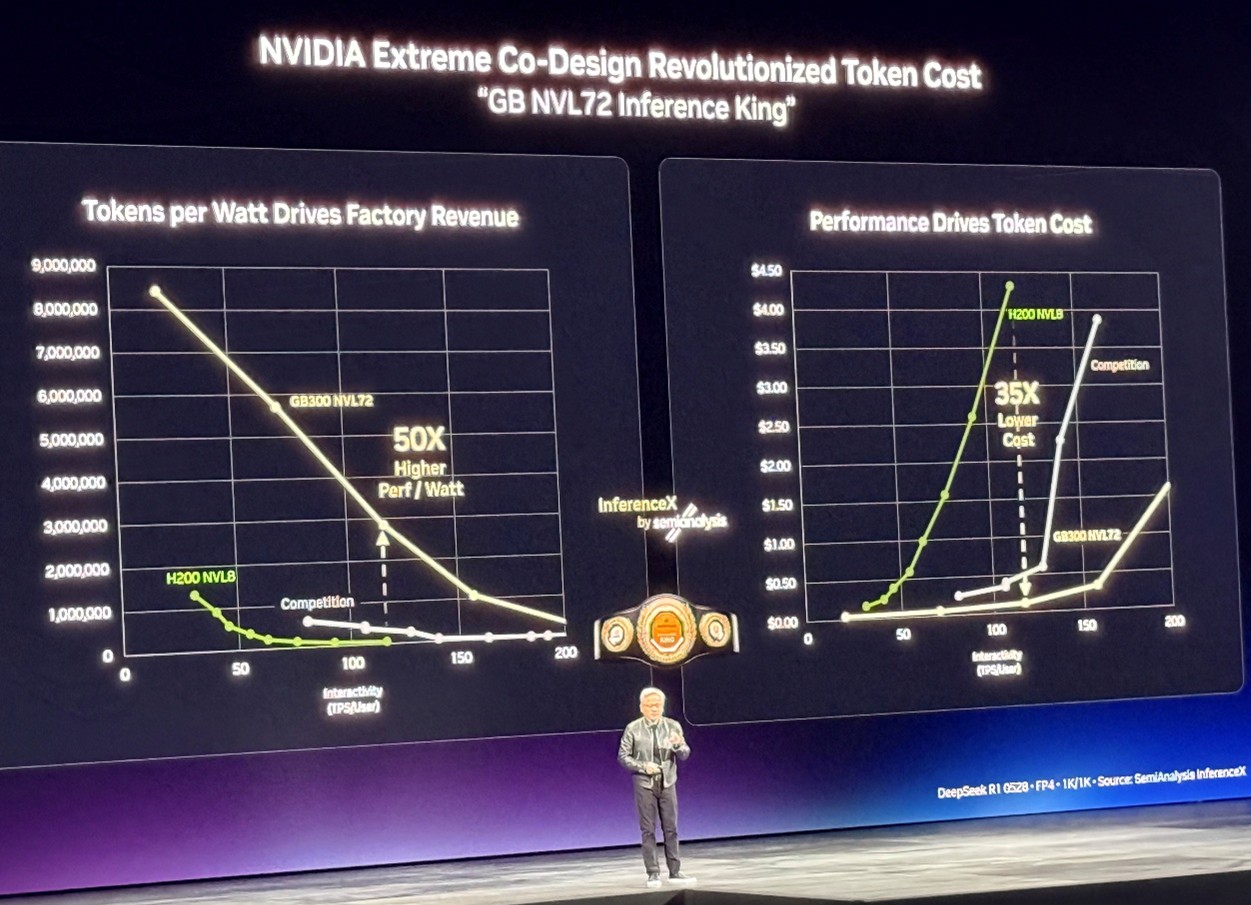

2. The NVIDIA Vera Rubin platform (and seven new chips) extends NVIDIA’s moat

Huang’s next message addressed how NVIDIA is responding to the demand shock: by shifting from standalone components to platform-scale systems. He unveiled the NVIDIA Vera Rubin platform, a coordinated architecture of seven chips designed to operate together as one AI supercomputer and to scale the world’s largest AI factories. As Huang put it, “Vera Rubin is a generational leap — seven breakthrough chips, five racks, one giant supercomputer — built to power every phase of AI.” The Groq 3 LPX was positioned as the inference accelerator for Vera Rubin, reflecting how AI systems increasingly depend on low-latency, large-context inference built into the platform layer.

What this means for infrastructure investment

As inference takes on a larger, more latency-sensitive role, architecture decisions will increasingly be made at the level of integrated systems (and the software stack that runs them), not just individual servers or accelerators. Leaders can expect the market to move toward platform- and rack-scale building blocks going forward.

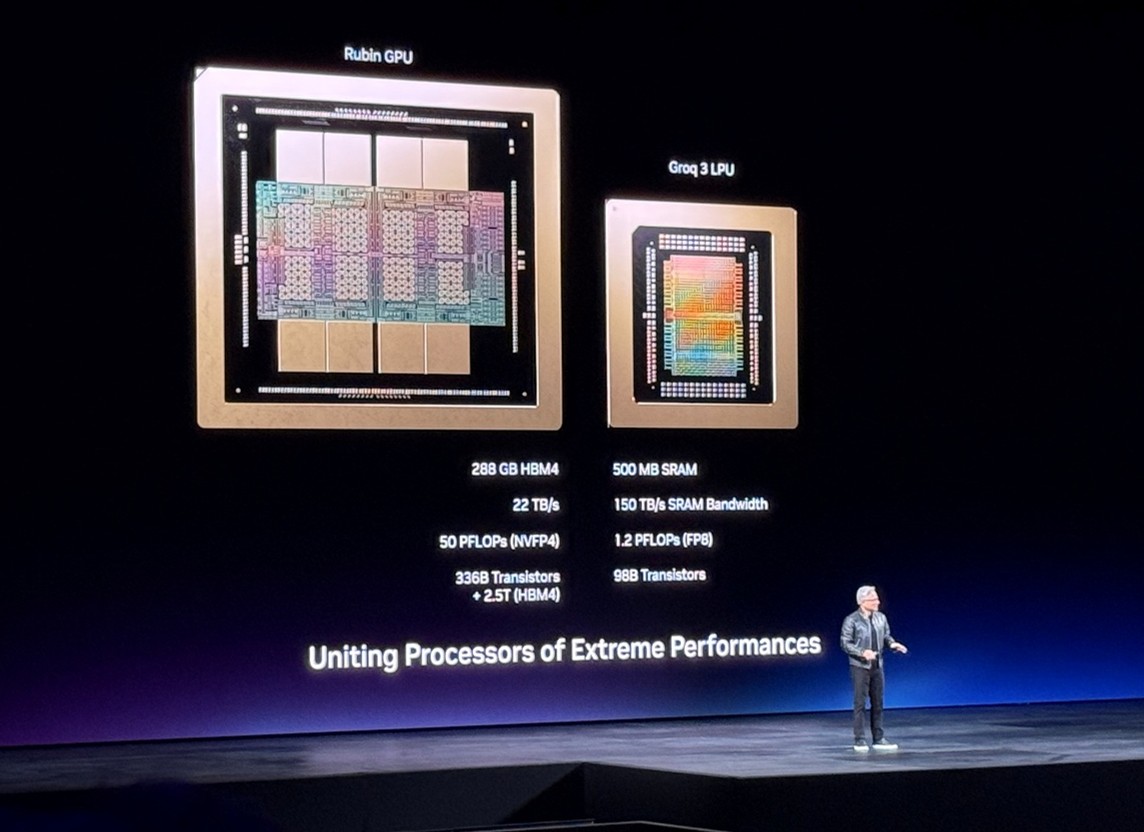

3. NVIDIA NemoClaw™ signals NVIDIA’s move into the control layer for agentic AI

If takeaways one and two describe the physics of hyperscaling (tokens driving inference demand, and platforms evolving into rack-scale AI factories), NVIDIA’s third message focuses on governance. Specifically, it centers on the layer that determines how those systems operate in practice. At GTC, Huang introduced NVIDIA NemoClaw™, an open-source stack designed to add privacy and security controls to the OpenClaw agent platform. OpenClaw creator Peter Steinberger said, “With NVIDIA and the broader ecosystem, we’re building the claws and guardrails that let anyone create powerful, secure AI assistants.” With this, NVIDIA appears to be positioning the agent layer as something that must be governed like infrastructure, rather than treated as an application add-on.

What this means for infrastructure investment

Infrastructure leaders should expect buying criteria to expand beyond compute and memory into control-plane capabilities. NemoClaw’s introduction suggests that the market is starting to treat agent operations as part of the AI stack itself, another layer that must scale as token volumes grow.

How SHI can help

While an appetite for AI-informed organizational transformation is important, AI ambition alone does not lead to scaled, production-ready outcomes.

SHI was named the 2026 NVIDIA Partner Network Rising Star Solution Provider Partner of the Year at this year’s NVIDIA GTC.

The deep partnership between SHI and NVIDIA enables organizations to:

- Scale from pilots to production-grade AI environments across workstations, data centers, and hybrid cloud architectures, built on NVIDIA-validated platforms and deployed with SHI’s infrastructure and integration expertise.

- Operationalize generative and agentic AI by aligning model strategy and deployment approach — whether open or proprietary, hosted or private — to the infrastructure layer beneath it, while accelerating adoption through modular, cloud-native frameworks.

- Deliver AI initiatives end to end, combining use-case development, workload migration, infrastructure integration, and rack-level design into a coordinated, enterprise-ready deployment motion.

In the next article on this topic, we’ll explore how NVIDIA’s multi-layered approach across energy, chips, infrastructure, models, and applications aligns with SHI services at each layer.

NEXT STEPS

Ready to grow your AI capabilities without outgrowing your infrastructure? Contact our team to learn how our NVIDIA-powered solutions and AI & Cyber Labs can help you make confident, strategic infrastructure investments.